Planet Python

Last update: June 10, 2026 04:43 PM UTC

June 10, 2026

Python Morsels

Stacks and queues in Python

Use a Python list for stack operations (last-in, first-out) and a deque from the collections module for queue operations (first-in, first-out).

Stacks versus Queues

In Computer Science, stacks and queues are data structures that are optimized to make it inexpensive to remove either the most recently added item or the least recently added item.

A queue is often called a FIFO data structure: first in, first out.

You can think of a queue as... well, a queue. Or a "line", for Americans like me. The first person to enter a queue will be the first person to reach the front of the queue.

And in programming queues, the first item added will be the first item removed.

A stack is often called a LIFO data structure: last in, first out.

You can think of a stack as a stack of plates... specifically one of those spring-loaded ones from a self-service lunch counter. The last plate that's added to the top of the stack will be the first plate removed from the top of the stack.

And in programming stacks, the last item added will be the first item that's removed.

But how do these terms apply to Python?
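As a quick illustration of the summary at the top of this post, here is a minimal sketch that uses a list as a stack and a `collections.deque` as a queue. The plate and person names are made up for the example.

```python
from collections import deque

# Stack: a plain list, adding and removing at the end (last in, first out).
stack = []
stack.append("plate 1")
stack.append("plate 2")
stack.append("plate 3")
top = stack.pop()  # the most recently added item comes off first

# Queue: a deque, adding on the right and removing from the left
# (first in, first out).
queue = deque()
queue.append("person 1")
queue.append("person 2")
queue.append("person 3")
front = queue.popleft()  # the least recently added item comes off first

print(top)    # plate 3
print(front)  # person 1
```

A deque is used for the queue because removing from the left end of a list means shifting every remaining item, while a deque is built for cheap removal at both ends.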

Stacks in Python

You can think of Python …

Read the full article: https://www.pythonmorsels.com/stacks-and-queues/

Real Python

Cursor vs Windsurf: Which AI Code Editor Is Best for Python?

AI-powered code editors have moved beyond novelty to become everyday tools for many Python developers. Instead of having to switch between your editor and a separate AI chat, you can use tools like Cursor and Windsurf that bring AI directly into your workflow. As a result, the Cursor vs Windsurf question is a common one for developers deciding which to adopt.

Both Cursor and Windsurf are VS Code forks that import your keybindings, themes, and Python extensions, and both run the same frontier models. They look similar at first but diverge in how they handle changes as you build.

Cursor focuses on control, surfacing AI-generated edits as reviewable diffs and relying on explicit rules to guide agent behavior. Windsurf focuses on flow, applying edits directly in the editor while using broader workspace context, including terminal output, recent edits, and conversation history, to shape its behavior.

In this tutorial, you’ll compare both editors across:

- AI code completion: How each editor’s completion system behaves and what context it draws on

- Agentic multi-file editing: How each editor handles tasks involving multiple files, directories, and the terminal

- Debugging and error correction: How each editor reviews generated code and integrates with your linter

By the end, you’ll have a clear picture of which editor fits your Python workflow. If you’re coming from VS Code, the Python Development in Visual Studio Code tutorial covers the baseline configuration that carries over to both forks.

The table below helps you choose the right editor at a glance:

| Use case | Cursor | Windsurf |
|---|---|---|
| You want AI-generated changes shown as reviewable diffs before they’re written to your files, guided by explicit rules | ✅ | — |
| You want edits applied directly as the agent works, using a broader workspace context (terminal output, recent edits, conversation history, and memory) | — | ✅ |

Cursor is the better fit if you want to review changes before they’re applied. Windsurf is the better fit if you prefer the agent to apply edits directly in your files as it works, drawing on the broader workspace context. To see how this plays out in completion, context management, and debugging, read on.

Get Your Code: Click here to download the free sample code for the resilient HTTP client you’ll build with Cursor and Windsurf in this tutorial.

Take the Quiz: Test your knowledge with our interactive “Cursor vs Windsurf: Which AI Code Editor Is Best for Python?” quiz. You’ll receive a score upon completion to help you track your learning progress:

Interactive Quiz

Cursor vs Windsurf: Which AI Code Editor Is Best for Python?

Test your understanding of how Cursor and Windsurf compare for Python across AI completion, agentic edits, and debugging workflows.

Metrics Comparison: Cursor vs Windsurf

As you work through the hands-on sections and eventually bring either editor into your own Python projects, the table below gives you a quick reference for some key differences you might expect from each tool:

| Metric | Cursor | Windsurf |
|---|---|---|
| IDE support | Standalone VS Code fork plus a JetBrains plugin | Standalone VS Code fork plus plugins for JetBrains IDEs, Vim, Neovim, Xcode, Visual Studio, and more |
| AI code completion | Fast, line-by-line prediction; strong on single-file typed structures | Slower but more structurally aware across interconnected files |
| Startup performance | Faster. Uses lightweight text search that requires no upfront project indexing. | Slower initial response. Builds a semantic map of your project structure before it begins. |
| Debugging performance | Identifies and fixes the root cause in one pass | Reaches passing tests by working around the root cause over multiple iterations |
| Resource impact | Light. Low background CPU and RAM usage. | Heavy. Background indexing can spike local CPU during initial project load. |
| Billing model | Monthly credit pool with unlimited Tab and Auto mode on Pro | Daily and weekly usage quotas that refresh automatically on a schedule |
| Pro plan pricing | $20/month | $20/month |
| Ideal project size | Small to medium codebases where you already know the structure and can target files manually | Large, highly interconnected codebases that benefit from its RAG-based context engine and automatic semantic indexing |

In the next sections, you’ll build a resilient HTTP client in Python from scratch and then send the same prompts to both editors to compare their responses.

Getting Started: Installation

Both editors ship as standalone desktop applications that closely match the VS Code experience. On first launch, they offer to import your local VS Code configuration, copying your keybindings, extensions, themes, and settings so your environment carries over with minimal setup.

To follow the hands-on project later in this tutorial, you’ll also want Python 3.12 or later installed on your system. Beyond that, if you need a full VS Code baseline before starting, the Python Development in Visual Studio Code (Setup Guide) course covers the editor setup from scratch.

Both Cursor and Windsurf offer free plans with enough model access to work through this comparison, though keep in mind that free-tier usage is limited and may run out under heavy use.

Installing Cursor

Head to the Cursor download page and download the correct version for your system. During setup, Cursor offers to import your VS Code configuration, including extensions, keybindings, and themes, so your environment carries over with minimal setup.

Once the editor opens, you’re ready to go. You don’t need to configure anything else yet.

If Cursor is new to you, Real Python’s video course on Tips for Using the AI Coding Editor Cursor covers setup, Agent mode, Plan mode, and model selection in a practical context, making the comparisons later in this tutorial easier to follow.

Installing Windsurf

Download Windsurf from the Windsurf download page and run the installer. The VS Code profile import works identically to Cursor’s.

Read the full article at https://realpython.com/cursor-vs-windsurf-python/ »

[ Improve Your Python With 🐍 Python Tricks 💌 – Get a short & sweet Python Trick delivered to your inbox every couple of days. >> Click here to learn more and see examples ]

Python GUIs

How to Set Row Background Colors in a QTableView — Use Qt's BackgroundRole to color entire rows based on your data

I have a QTableView table showing some data about connected devices. How can I highlight rows to give a visual indicator of the current status of the device?

When you're working with a QTableView and a custom model, it's common to want to highlight entire rows based on some condition in your data. For example, you might want to color a row blue when a device has a connected status, or red when something has gone wrong.

Understanding How data() Works

In Qt's Model/View architecture, the view calls your model's data() method for every cell in the table — and for each cell, it asks about multiple roles. One of those roles is Qt.BackgroundRole, which tells the view what background color to use for that cell.

The view asks for Qt.BackgroundRole on every single cell, not just one column. So if your data() method returns a color for Qt.BackgroundRole based on the row data (ignoring the column), the color will be applied to every cell in that row.

Let's build a working example.

A Complete Working Example

Here's a full example you can run directly. It creates a QTableView with colored rows based on the PRESENT_STATUS field in each row of data:

    import sys
    from typing import Union

    from PyQt6.QtCore import QAbstractTableModel, QModelIndex, Qt
    from PyQt6.QtGui import QColor
    from PyQt6.QtWidgets import QApplication, QMainWindow, QTableView


    class TableModel(QAbstractTableModel):
        def __init__(self, data: Union[list, None] = None):
            super().__init__()
            self._data = data or []
            self._hdr = self._gen_hdr_data() if data else []
            # Map each status value to the color its row should get.
            self._base_color = {
                "NewConnection": QColor("blue"),
                "Registered": QColor("green"),
            }

        def _gen_hdr_data(self):
            """Build a sorted list of all unique keys across all row dicts."""
            all_keys = set()
            for d in self._data:
                all_keys.update(d.keys())
            return sorted(all_keys)

        def rowCount(self, parent=QModelIndex()):
            return len(self._data)

        def columnCount(self, parent=QModelIndex()):
            return len(self._hdr)

        def headerData(self, section, orientation, role):
            # PyQt6 needs fully scoped enum names (Qt.ItemDataRole.DisplayRole),
            # not the Qt.DisplayRole shorthand.
            if (
                role == Qt.ItemDataRole.DisplayRole
                and orientation == Qt.Orientation.Horizontal
            ):
                return self._hdr[section]
            return None

        def data(self, index: QModelIndex, role: int):
            if not index.isValid():
                return None

            row_dict = self._data[index.row()]
            state = row_dict.get("PRESENT_STATUS", "")

            if role == Qt.ItemDataRole.DisplayRole:
                col_key = self._hdr[index.column()]
                value = row_dict.get(col_key, "")
                return str(value) if value else ""

            if role == Qt.ItemDataRole.BackgroundRole:
                # The column is ignored here, so every cell in the row
                # gets the same color.
                color = self._base_color.get(state)
                if color:
                    return color

            return None


    class MainWindow(QMainWindow):
        def __init__(self):
            super().__init__()
            self.setWindowTitle("Row Background Colors in QTableView")

            data = [
                {"IP": "192.168.1.10", "PRESENT_STATUS": "NewConnection"},
                {"IP": "192.168.1.108", "FORMER_STATUS": "NewConnection",
                 "PRESENT_STATUS": "Registered"},
                {"IP": "192.168.1.50", "PRESENT_STATUS": "Unknown"},
            ]

            self.table = QTableView()
            model = TableModel(data)
            self.table.setModel(model)
            self.setCentralWidget(self.table)


    app = QApplication(sys.argv)
    window = MainWindow()
    window.show()
    app.exec()

Qt calls a method named data() on the model, so the example above stores the list as self._data (with a leading underscore). Assigning it to self.data instead would overwrite that method on the instance.

Run this and you'll see three rows. The first row ("NewConnection") has a blue background, the second row ("Registered") has a green background, and the third row ("Unknown") has no special coloring because it isn't in the _base_color dictionary.

How Colors are Set on Rows

To understand how the color is being set to the entire row, take a look at the Qt.BackgroundRole section of data():

    if role == Qt.ItemDataRole.BackgroundRole:
        color = self._base_color.get(state)
        if color:
            return color

Notice that index.column() isn't used here at all. The color decision is based entirely on the row's PRESENT_STATUS value. Since the view calls data() for every cell in the row — column 0, column 1, column 2, etc. — and each call gets the same color back, the entire row ends up painted.

If you only wanted to color a specific column (say, just the status column), you would add a column check:

    if role == Qt.ItemDataRole.BackgroundRole:
        # Only color the PRESENT_STATUS column
        if self._hdr[index.column()] == "PRESENT_STATUS":
            color = self._base_color.get(state)
            if color:
                return color

Making the Text Readable

One thing you'll notice with a dark background color like blue is that the default black text becomes hard to read. You can fix this by also handling Qt.ForegroundRole and returning a light text color when the background is dark:

    def data(self, index: QModelIndex, role: int):
        if not index.isValid():
            return None

        row_dict = self._data[index.row()]
        state = row_dict.get("PRESENT_STATUS", "")

        if role == Qt.ItemDataRole.DisplayRole:
            col_key = self._hdr[index.column()]
            value = row_dict.get(col_key, "")
            return str(value) if value else ""

        if role == Qt.ItemDataRole.BackgroundRole:
            color = self._base_color.get(state)
            if color:
                return color

        if role == Qt.ItemDataRole.ForegroundRole:
            # If this row has a background color, use white text.
            if state in self._base_color:
                return QColor("white")

        return None

Now blue and green rows will have white text, making everything easy to read.
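If you later add lighter background colors, hard-coded white text may stop being readable. The sketch below is one way to pick the text color from the background instead. It is not part of the example above: the `readable_text_color` helper, the weighted-sum brightness formula, and the cutoff of 128 are all assumptions you may want to tune.

```python
def readable_text_color(red: int, green: int, blue: int) -> str:
    """Return "white" or "black", whichever should contrast better.

    Uses a common perceived-brightness approximation (a weighted RGB sum).
    The cutoff of 128 is an assumption, not a Qt rule.
    """
    brightness = 0.299 * red + 0.587 * green + 0.114 * blue
    return "white" if brightness < 128 else "black"


# RGB values of the named colors "blue", "green", and "yellow".
print(readable_text_color(0, 0, 255))    # white
print(readable_text_color(0, 128, 0))    # white
print(readable_text_color(255, 255, 0))  # black
```

Inside data(), you could feed it the components of the row's background color (color.red(), color.green(), color.blue()) and wrap the result in a QColor.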

Updating Colors Dynamically

If your data changes at runtime — for example, a device's status changes from "NewConnection" to "Registered" — you need to tell the view that something has changed so it repaints. You do this by emitting the dataChanged signal:

    def update_status(self, row, new_status):
        self._data[row]["PRESENT_STATUS"] = new_status

        # Emit dataChanged for the entire row.
        top_left = self.index(row, 0)
        bottom_right = self.index(row, self.columnCount() - 1)
        self.dataChanged.emit(top_left, bottom_right)

This tells the view to re-query data() for every cell in that row, which picks up both the new display text and the new background color. For a deeper look at how signals work to keep your model and view in sync, see Signals, Slots & Events.

Summary

Once you understand how the model's data() method works, coloring entire rows in a QTableView is relatively straightforward. The view asks for each role on every cell, so returning a color from Qt.BackgroundRole based on row-level data — without filtering by column — naturally paints the whole row. Pair that with Qt.ForegroundRole for readable text, and you've got a clean, data-driven way to highlight rows in your table.

To learn more about using QTableView with custom models and data from numpy or pandas, see the QTableView with numpy and pandas tutorial. If you want to add sorting and filtering to your table, take a look at Sorting and Filtering Tables.

For an in-depth guide to building Python GUIs with PyQt6, see my book, Create GUI Applications with Python & Qt6.

Python Insider

Python 3.14.6 and 3.13.14 are now available!

A pair of bug fix releases await your upgrade.

Seth Michael Larson

Are insecure code completions a vulnerability?

Three months ago I saw that PyCharm shipped with a “Full Line Completion” plugin that “uses a local deep learning model to suggest entire lines of code”. These suggestions manifest as whole-line suggestions after you start typing and can be accepted with Tab. Essentially auto-complete for entire lines.

I decided to test this functionality. I started by writing import urllib3, created a new line, and then typed u and received a suggested completion for the line (the second line below).

I was not impressed by the result:

    import urllib3
    urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

Accepting this line would mean that any insecure requests made with urllib3 would not result in a user-visible warning. I didn't accept this suggestion and then began to instantiate a urllib3.PoolManager and what I feared would come next was confirmed:

    import urllib3
    urllib3.PoolManager(
        cert_reqs='CERT_NONE',

The suggestion offered to disable certificate verification (CERT_NONE) which would make every request made by the PoolManager susceptible to monster-in-the-middle (MITM) attacks. Accepting this code as-is would mean the program I am writing has a severe vulnerability. If I had accepted the prior suggestion too, then urllib3 would have no chance to warn the user about this mistake prior to productionizing this code.
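To make the difference concrete, here is a small sketch of what CERT_NONE switches off. It uses only the standard library's ssl module so that it runs without urllib3 installed. The `verifies_peer` helper is a name made up for this illustration.

```python
import ssl

# The default context verifies the server certificate and its hostname.
secure = ssl.create_default_context()

# What CERT_NONE amounts to: verification switched off entirely.
# Shown only for contrast; avoid this outside of throwaway tests.
insecure = ssl.create_default_context()
insecure.check_hostname = False  # must be disabled before setting CERT_NONE
insecure.verify_mode = ssl.CERT_NONE


def verifies_peer(context: ssl.SSLContext) -> bool:
    """Return True if the context checks both the certificate and hostname."""
    return context.verify_mode == ssl.CERT_REQUIRED and context.check_hostname


print(verifies_peer(secure))    # True
print(verifies_peer(insecure))  # False
```

With verification off, a connection will accept any certificate an attacker presents, which is why the suggested completion is so risky.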

Clearly something insecure is going on here, but for a CVE to be assigned we have to decide which software component is vulnerable. Does this behavior warrant a CVE at all? I am not sure, which is unfortunate: without a security angle to a bug report, companies are less likely to prioritize it.

I reported this behavior to JetBrains for “Full Line Code Completion” v253.29346.142, and clearly their support staff weren't certain whether this defect was a security vulnerability either. They confirmed this report wasn’t a “direct security vulnerability” (which I agree with), but when I asked to publish a blog post about this behavior, I was asked not to publicize my report and was referred to PyCharm’s Coordinated Disclosure Policy. So... which is it? Security vulnerability or not?

I ended up waiting the 90 days anyway and I didn't hear back with any substantive update from the development team. I double-checked again today using “Full Line Code Completion” v261.24374.152 and the behavior is identical, suggesting the same insecure code for both contexts.

This isn’t meant to be a specific dig at PyCharm or JetBrains; I have no doubt that examples like this exist in every code generation model available. I don’t think using CVEs for this purpose is appropriate or helpful for users, either. But not prioritizing and addressing this behavior at the source means more work to mitigate the potential for insecure code to be accepted by users who are trusting what is offered to them by their IDE.

What do you think? I am interested in knowing your thoughts about this specific class of issue with code generation models.

Thanks for reading ♥ I would love to hear your thoughts! Contact me via Mastodon, Bluesky, or email. Browse the blog archive. Check out my blogroll.

Armin Ronacher

Gaslighting Openness

I have been a staunch supporter of Open Source for a long time, including experiments in funding it. I’m a true believer in the idea that Open Source always wins in the long run, but not automatically and not quickly. Right now it is being stressed by AI slop, shifting contributor dynamics, the falling cost of producing code, and large companies learning to close doors behind them.

A lot of that battle today is manipulation of the narrative. Opinion makers on social media and in business circles increasingly frame access as irresponsibility. That is why the EU’s DMA matters, even if many people (including myself) reflexively hate EU regulation. Apple’s fight over delayed AI features in Europe is not about Brussels being annoying: it is about whether users can access their own devices and data. The phone is yours and the data is yours, yet Apple decides who may reach it, takes the agency away from you, and then tries to make that sound like it is in your interest (supposedly it’s for your safety and security).

The closer you get to the core of AI, the more this shows up. Anthropic has every financial incentive to restrict what people can do with Mythos and Fable, and they wrap those restrictions in safety and (national) security language. Some restrictions may be defensible, but not all of them are. They trained their models on public works, then block Open Source attempts to learn from and distill these systems.

Disliking the EU, China, or any other large government should not make us forget that true democratized access to technology including AI is in all our interest. Some temporary product pain, including delayed Apple AI features, will be worth paying if it keeps gates open. We should not let companies own the narrative that preventing access is in our interest, particularly not as Europeans where the odds are already stacked against us by our underdeveloped capital markets, brain drain and internal fighting.

June 09, 2026

PyCoder’s Weekly

Issue #738: sleep(), Polars Workflows, Iterators, and More (2026-06-09)

#738 – JUNE 9, 2026

View in Browser »

Python sleep(): How to Add Time Delays to Your Code

Learn how to use Python’s sleep() function to add time delays and pause your code with time.sleep(), decorators, threads, and asyncio.

REAL PYTHON

Libraries for Your Python Polars Workflows

Four excellent libraries for your data science workflow with support for Polars DataFrames

ISABELLA VELÁSQUEZ • Shared by Isabella Velásquez

B2B AI Agent Auth Support

Your users are asking if they can connect their AI agent to your product, but you want to make sure they can do it safely and securely. PropelAuth makes that possible →

PROPELAUTH sponsor

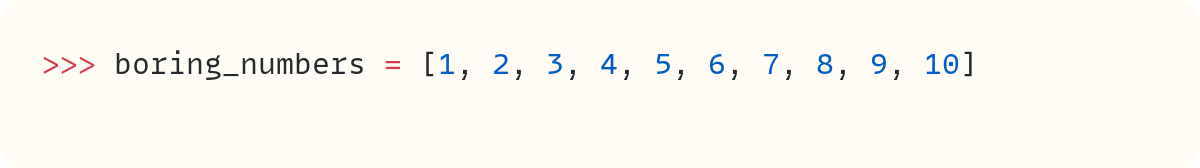

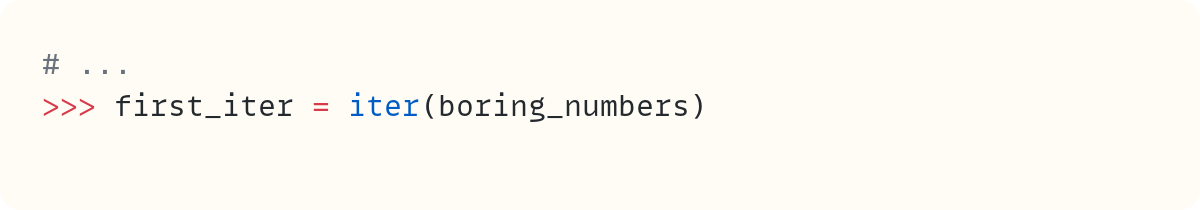

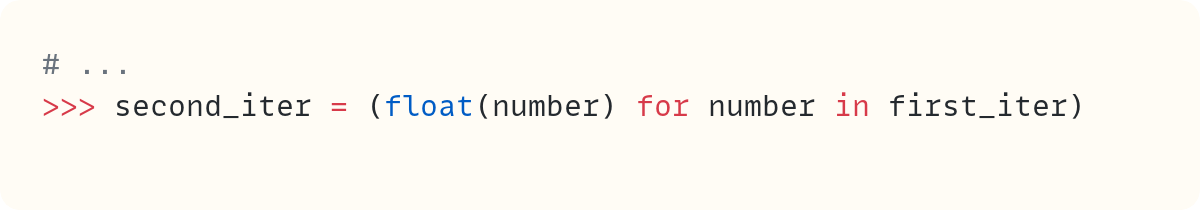

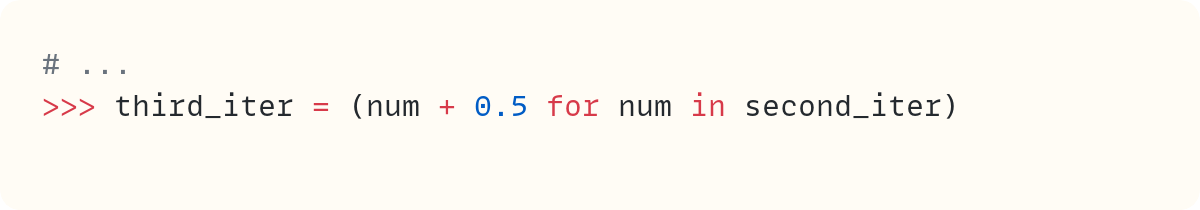

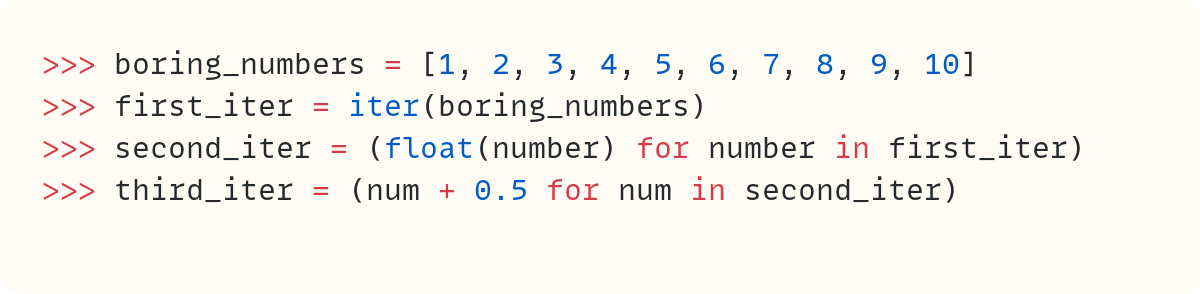

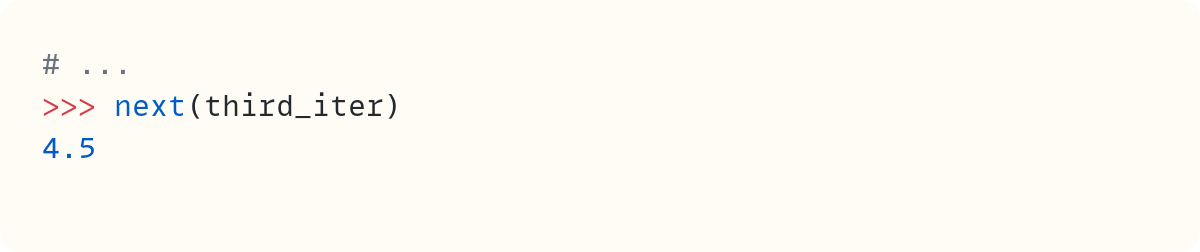

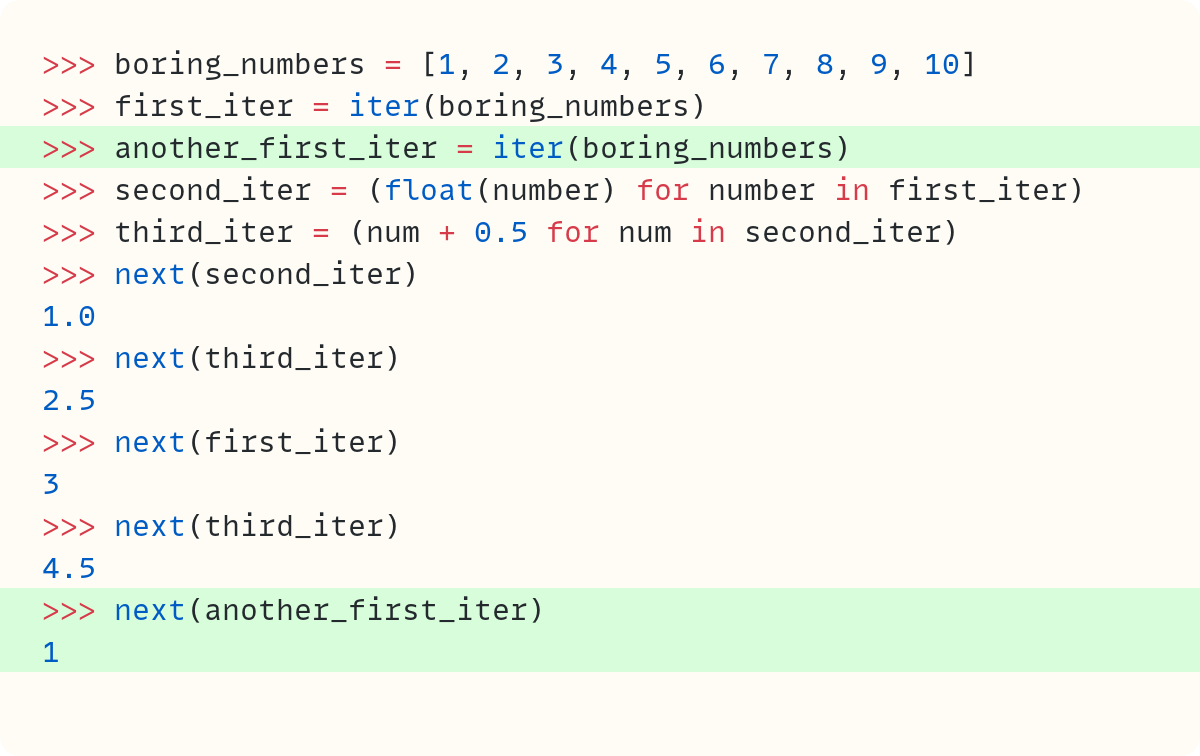

Down the Iterator Rabbit Hole

Following the trail when you have a chain of iterators

STEPHEN GRUPPETTA

Articles & Tutorials

olmOCR-2 vs PaddleOCR-VL: Which Extracts PDF Tables Better?

Compare olmOCR-2 and PaddleOCR-VL on a real arXiv PDF with dense technical tables. This article walks through a Python-based OCR workflow, then evaluates how each model handles table detection, runtime, numeric accuracy, merged cells, and multi-tier headers.

KHUYEN TRAN • Shared by Khuyen Tran

Using Typing in Python Leads to Different Sorts of Code

Chris has been moving lots of code from Python 2 to 3 and experimenting with more rigid type hints as he goes along. He’s found that keeping the type checker happy makes him write code in a different way, almost like writing in a second language.

CHRIS SIEBENMANN

Django: Introducing Django-Integrity-Policy

Recently, browsers have added support for the new Integrity-Policy response header (Firefox 145+, Chrome 138+). Adam quickly went to work to build a library that enables your Django project to take advantage of the feature.

ADAM JOHNSON

PSF Strategic Plan 2026 Draft

The Python Software Foundation board has been developing a strategic plan to guide the foundation’s direction over the next five years. The first draft has been released and they’re looking for community feedback.

PYTHON SOFTWARE FOUNDATION

EuroPython 2026 Language Summit Talks

This year’s EuroPython includes a Python Language Summit. This post highlights the talks scheduled for it, including adding Rust capabilities to CPython, an update on incremental garbage collection, and more.

EUROPYTHON.EU

Free Threading Internals: Reference Counting

This article describes how the lifetime of Python objects is tracked using reference counting and how that is affected by the changes brought about by removing the GIL.

VICTOR STINNER

Keep Your Developer Instincts When AI Writes the Code

The promise was less friction. The cost, it turns out, is instinct, a high price to pay. Bob’s answer: add deliberate practice to your routine, and keep the struggle.

BOB BELDERBOS • Shared by Bob Belderbos

How to Use GitHub Copilot Code Review in Pull Requests

Learn how to use GitHub Copilot code review on pull requests for AI-assisted feedback, one-click fixes, and project-specific custom instructions.

REAL PYTHON

Parsing XML EXIF From .avif Files (Plus a Rant)

The .avif format tends to result in smaller files, but the EXIF strippers that Andrew was using didn’t support the format, so he wrote his own.

ANDREW STEPHENS

Structuring Your Python Script

Master Python script structure with best practices for shebangs, ordered imports, formatting with Ruff, constants, and a clean entry point.

REAL PYTHON course

Projects & Code

Events

Weekly Real Python Office Hours Q&A (Virtual)

June 10, 2026

REALPYTHON.COM

Python Atlanta

June 11 to June 12, 2026

MEETUP.COM

PyDelhi User Group Meetup

June 13, 2026

MEETUP.COM

DFW Pythoneers 2nd Saturday Teaching Meeting

June 13, 2026

MEETUP.COM

DjangoCologne

June 16, 2026

MEETUP.COM

PyCon Singapore 2026

June 19 to June 22, 2026

PYCON.SG

Happy Pythoning!

This was PyCoder’s Weekly Issue #738.

[ Subscribe to 🐍 PyCoder’s Weekly 💌 – Get the best Python news, articles, and tutorials delivered to your inbox once a week >> Click here to learn more ]

Python Docs Editorial Board

Meeting Minutes: Jun 9, 2026

Meeting Minutes from Python Docs Editorial Board: Jun 9, 2026

Real Python

Accessing Multiple AI Models With the OpenRouter API

One of the quickest ways to call multiple AI models from a single Python script is to use OpenRouter’s API, which acts as a unified routing layer between your code and multiple AI providers. By the end of this course, you’ll be able to access models from several providers through one unified API.

This convenience matters because the AI ecosystem is highly fragmented: each provider exposes its own API, authentication scheme, rate limits, and model lineup. Working with multiple providers often requires additional setup and integration effort, especially when you want to experiment with different models, compare outputs, or evaluate trade-offs for a specific task.

OpenRouter gives you access to thousands of models from leading providers like OpenAI, Anthropic, Mistral, Google, and Meta. You can switch between them without changing your application code.
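To show what "switching models without changing your application code" can look like, here is a minimal sketch that builds the same request for two different models and changes only the model string. Several things here are assumptions rather than details from the course: the endpoint URL (OpenRouter's OpenAI-compatible chat completions URL), the `build_chat_request` helper, the placeholder API key, and the illustrative model IDs. Nothing is sent over the network.

```python
import json
import urllib.request

# Assumed endpoint: OpenRouter's OpenAI-compatible chat completions URL.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat request for the given model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Illustrative model IDs only; check OpenRouter's model list for real ones.
for model_id in ["openai/example-model", "anthropic/example-model"]:
    request = build_chat_request(model_id, "Say hello.", api_key="YOUR_API_KEY")
    print(request.get_method(), json.loads(request.data)["model"])
```

Only the model string differs between the two requests. The URL, headers, and message format stay the same.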

Quiz: Embeddings and Vector Databases With ChromaDB

In this quiz, you’ll test your understanding of Embeddings and Vector Databases With ChromaDB.

By working through this quiz, you’ll revisit key concepts like vectors, cosine similarity, word and text embeddings, ChromaDB collections, metadata filtering, and retrieval-augmented generation (RAG).

Quiz: Accessing Multiple AI Models With the OpenRouter API

In this quiz, you’ll test your understanding of Accessing Multiple AI Models With the OpenRouter API.

By working through this quiz, you’ll revisit how OpenRouter provides a unified routing layer, how to call AI models from a single Python script, how to switch between intelligent routing and a specific model, how to prioritize providers, and how to add model fallbacks for reliability.

It also reinforces how to weigh trade-offs like cost, latency, and quality when you choose a model for your use case.

Python Bytes

#483 Thanks Brian

Topics covered in this episode:

- Vulnerability and malware checks in uv
- [HTTP GET requests with the Python standard library](https://alexwlchan.net/2026/python-http-with-the-stdlib/?featured_on=pythonbytes)
- Millions of AI agents imperiled by critical vulnerability in open source package
- [alembic-git-revisions](https://github.com/Mergifyio/alembic-git-revisions?featured_on=pythonbytes)
- Extras
- Joke

[Watch on YouTube](https://www.youtube.com/watch?v=WIykgbceuVg)

About the show

Goodbye and Thanks Brian

Thanks Calvin for being part of this and future episodes! Also new time for the live show. Thanks Brian for all the hard work over the years.

Calvin #1: Vulnerability and malware checks in uv

- Released just yesterday by Astral: https://astral.sh/blog/uv-audit
- `uv audit` scans dependencies for known vulnerabilities and abandoned packages via the OSV database, and runs 4–10x faster than `pip-audit`
- Malware check runs on every install/sync, catching actively malicious packages (credential stealers, etc.) before they execute, including ones PyPI quarantined but lockfiles can still reference
- Enable malware scanning with `UV_MALWARE_CHECK=1`. It's opt-in and in preview
- Future roadmap includes a resolver that steers toward vulnerability-free versions and install-time warnings scoped to newly added deps only

Michael #2: [HTTP GET requests with the Python standard library](https://alexwlchan.net/2026/python-http-with-the-stdlib/?featured_on=pythonbytes)

- If you’re doing HTTP in Python, you’re probably using one of three popular libraries: [requests](https://requests.readthedocs.io/en/latest/?featured_on=pythonbytes), [httpx](https://github.com/encode/httpx?featured_on=pythonbytes), or [urllib3](https://github.com/urllib3/urllib3?featured_on=pythonbytes).
- There have been [issues with httpx lately](https://pythonbytes.fm/episodes/show/476/common-themes).
- [Niquests](https://github.com/jawah/niquests?featured_on=pythonbytes) is another option: Drop-in replacement for Requests. Automatic HTTP/1.1, HTTP/2, and HTTP/3. WebSocket, and SSE included.
- But maybe less is more, especially in the age of agentic AI
- A good candidate needs two things to be true at once, not one: the used surface is small, and the behavior behind that surface is shallow.

Calvin #3: Millions of AI agents imperiled by critical vulnerability in open source package

- "BadHost" (CVE-2026-48710) is a critical vulnerability in Starlette, the ASGI framework underlying FastAPI, with 325 million weekly downloads; also affects vLLM, LiteLLM, and most MCP server tooling
- The exploit is trivial: injecting a single character into an HTTP Host header bypasses path-based authentication, and can lead to credential theft, SSRF, and in some cases remote code execution
- MCP servers are a prime target since they store credentials for external services (email, databases, cloud accounts). Exposed data in the wild includes biopharma clinical trial DBs, full mailboxes, HR/PII pipelines, and AWS topology
- Fix is available: patch to Starlette 1.0.1 immediately; use the free scanner at mcp-scan.nemesis.services to check if your servers are still running a vulnerable version
- Open source sustainability footnote: the maintainer triages near-daily security reports solo, in his free time. Most are AI-generated noise, and real ones like this still compete for the same evenings and weekends

Michael #4: [alembic-git-revisions](https://github.com/Mergifyio/alembic-git-revisions?featured_on=pythonbytes)

- By Julien Danjou from [Mergify](https://mergify.com/?featured_on=pythonbytes)
- Automatic [Alembic](https://alembic.sqlalchemy.org/?featured_on=pythonbytes) migration chaining based on git commit history. No more `Multiple head revisions are present for given argument 'head'`.
- See [the introductory article](https://julien.danjou.info/blog/fixing-alembics-multiple-heads-problem-with-git/?featured_on=pythonbytes)
- The error is caused by two migrations landing with the same `down_revision`, so Alembic doesn’t know which one comes first. The fix is always the same: someone manually edits the migration file to re-chain the revisions.
- The insight: git already knows the order

Extras

Calvin:

- GNU `make` can do pattern matching in the target. Not new at all, mentioned in the 1994-era docs. `just` and `task` don’t have this super power on the target name yet.

    train-%:
    	uv run ./train.py $* --save-hyper-params --overwrite $(TRAIN_ARGS)

Michael:

- Updated my packages that use an HTTP client from httpx to [httpx2](https://github.com/pydantic/httpx2?featured_on=pythonbytes): [listmonk](https://pypi.org/project/listmonk/?featured_on=pythonbytes), [umami](https://pypi.org/project/umami-analytics/?featured_on=pythonbytes), and [memberful](https://pypi.org/project/memberful/?featured_on=pythonbytes). For motivation, see [this reddit thread](https://www.reddit.com/r/Python/comments/1rl5kuq/anyone_know_whats_up_with_httpx/?featured_on=pythonbytes).

Joke: [Accurate](https://x.com/PR0GRAMMERHUM0R/status/2061508112083714478?featured_on=pythonbytes)
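For the standard-library HTTP item in this episode, here is a minimal sketch of a GET request that uses only urllib. It is not the code from the linked article. The `build_get_request` and `fetch_json` helpers and the example.com URL are placeholders invented for illustration, and nothing is sent until `fetch_json()` is called.

```python
import json
import urllib.parse
import urllib.request


def build_get_request(base_url: str, params: dict[str, str]) -> urllib.request.Request:
    """Build a GET request with URL-encoded query parameters."""
    url = f"{base_url}?{urllib.parse.urlencode(params)}"
    return urllib.request.Request(url, headers={"Accept": "application/json"})


def fetch_json(request: urllib.request.Request, timeout: float = 10.0):
    """Send the request and decode a JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


# example.com is a placeholder host; no network call happens here.
request = build_get_request("https://example.com/api/search", {"q": "python http"})
print(request.get_method())  # GET
print(request.full_url)      # https://example.com/api/search?q=python+http
```

Splitting request building from sending keeps the part you can check offline separate from the part that needs a network.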

Hynek Schlawack

How to Ditch Codecov for Python Projects

Codecov’s unreliability breaking CI on my open source projects has been a constant source of frustration for me for years. I have found a way to enforce coverage over a whole GitHub Actions build matrix that doesn’t rely on third-party services.

June 08, 2026

Real Python

Python 3.15 Hits Feature Freeze and Other News for June 2026

While the Northern Hemisphere warms up for summer, Python 3.15 went the other way with its beta 1 feature freeze 🥶. Since May 7, the list of what will be included in the next release is final. That list includes a brand-new sentinel built-in that finally standardizes a pattern Python developers have been hand-rolling for decades.

And while AI kept writing code, buggy or not, developers also directed it to look for bugs in code that had been sitting untouched for years. The results were hundreds of bug fixes in Python’s C extensions and in Firefox. Meanwhile, in a quieter corner of the ecosystem, Pydantic forked httpx, kicking off one of the more interesting governance stories of the year.

Time to dig into the Python news from the past month!

Join Now: Click here to join the Real Python Newsletter and you’ll never miss another Python tutorial, course, or news update.

Python Releases and PEP Highlights

The 3.15 release of CPython crossed from alpha into beta, which means its feature set is now frozen, and the Steering Council cleared out a backlog of proposals before the gate closed. Two of those changes will touch the code you write every day.

Beta 1 Marks the 3.15 Feature Freeze

Last month, the eighth and final alpha rolled out as the runway to the beta phase. With Python 3.15.0b1 on May 7 came the feature freeze, which means that from here until the final release of 3.15, the core team works only on bug fixes and polishing.

That makes the beta releases a good moment to step back and look at the headline features of 3.15, which are now locked:

- Explicit lazy imports (PEP 810) for faster startup

- A frozendict built-in (PEP 814) for immutable mappings

- A sentinel built-in (PEP 661), which you’ll dig into below

- Unpacking in comprehensions (PEP 798)

- UTF-8 as the default encoding (PEP 686)

- A stable ABI for free-threaded builds (PEP 803), plus C-API modernization (PEPs 820 and 793) that should make it easier to write C extensions that work across Python versions

- A new sampling profiler in the standard library (PEP 799) for low-overhead profiling

The JIT compiler also gets faster, with the beta announcement citing an 8–9 percent geometric-mean improvement on x86-64 Linux. If you’ve been putting off testing your code against 3.15, then now is the time to get started! The API surface won’t shift under you anymore, and your feedback will help catch regressions before the release candidate phase.

Note: Beta builds are for testing, not production. Install the pre-release version, run your test suite against 3.15, and report anything that breaks while there’s still time to fix it before the release candidate.

The first round of improvements already landed with beta 2 on June 2, and the next big checkpoint is the release candidate phase on August 4, with the final release expected, as usual, this fall.

A Built-in sentinel Lands in Python 3.15

Here’s the new feature that you’ll likely want to reach for. If you’ve ever needed to tell the difference between a caller passing None and a caller passing nothing at all, then you’ve probably written something like this:

_MISSING = object()

def update(value=_MISSING):
    if value is _MISSING:
        ...  # No value was provided

It works, but it has rough edges. The repr() is an unhelpful <object object at 0x7f...>, the marker can’t be used cleanly in type annotations, and its identity doesn’t survive copying or pickling. PEP 661 replaces the idiom with a new sentinel built-in:

MISSING = sentinel("MISSING")

def update(value: int | MISSING = MISSING) -> None:
    if value is MISSING:
        ...  # No value was provided

The signature is sentinel(name, /, *, repr=None), and the result is a unique truthy object whose default repr() is the name you gave it, so MISSING shows up as MISSING in tracebacks instead of a memory address.

Note: Sentinels and None solve related but different problems. If you’re still fuzzy on when None is the right tool, then Real Python’s guide to Python’s None is worth revisiting.

Because the sentinel is its own type, you can drop it straight into annotations like int | MISSING without reaching for Literal. The PEP was first submitted back in 2021, so it’s satisfying to see it cross the finish line.
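For interpreters that predate 3.15, the behavior described above can be approximated by hand. The sketch below is a rough stand-in and not the PEP 661 implementation: the Sentinel class and the strings returned by update() are hypothetical names chosen for illustration, and the class only covers unique identity, truthiness, and a readable repr().

```python
class Sentinel:
    """Hypothetical stand-in for a named sentinel on Python versions before 3.15."""

    def __init__(self, name):
        self._name = name

    def __repr__(self):
        # Show the given name instead of a memory address.
        return self._name


MISSING = Sentinel("MISSING")


def update(value=MISSING):
    # Identity comparison tells "nothing passed" apart from an explicit None.
    if value is MISSING:
        return "no value provided"
    if value is None:
        return "explicit None"
    return f"value={value!r}"


calls = [update(), update(None), update(3)]
```

Unlike the built-in described in the article, this stand-in does nothing special for copying, pickling, or type annotations.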

PEP 829 Graduates From Draft to Accepted

Last month’s roundup featured PEP 829 while it was still a draft. It’s since been accepted for Python 3.15, so the change is now official.

As a quick recap, .pth files in your site-packages directory can do two things:

- Extend sys.path

- Run arbitrary code through import lines that Python feeds directly to exec() at startup
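Both behaviors can be observed without touching a real site-packages directory. The following is a minimal sketch, assuming the .pth handling of current CPython releases (before any PEP 829 changes): it writes a throwaway demo.pth into a temporary directory and asks site.addsitedir() to process it. The file name, the extra_pkgs directory, and the PTH_DEMO_RAN environment variable are made up for the demonstration.

```python
import os
import site
import sys
import tempfile

with tempfile.TemporaryDirectory() as site_dir:
    # A directory that the .pth file will add to sys.path.
    extra = os.path.join(site_dir, "extra_pkgs")
    os.mkdir(extra)

    with open(os.path.join(site_dir, "demo.pth"), "w", encoding="utf-8") as f:
        # A plain line is treated as a path relative to the site directory.
        f.write("extra_pkgs\n")
        # A line starting with "import" is executed as code.
        f.write("import os; os.environ['PTH_DEMO_RAN'] = '1'\n")

    # Process the directory the way site-packages is processed at startup.
    site.addsitedir(site_dir)

    path_extended = extra in sys.path
    code_ran = os.environ.get("PTH_DEMO_RAN") == "1"
```

In the sketch, both path_extended and code_ran should end up true, which shows why a stray .pth file can execute code at interpreter startup.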

Read the full article at https://realpython.com/python-news-june-2026/ »

[ Improve Your Python With 🐍 Python Tricks 💌 – Get a short & sweet Python Trick delivered to your inbox every couple of days. >> Click here to learn more and see examples ]

death and gravity

Ordered key sharding in DynamoDB

So, you want to keep a sorted index in DynamoDB, but for whatever reason – usually throughput-related – it won't fit on a single partition. What do you do?

Today, we look at the available solutions, do the math, and find out which is best.

Tip

This worked example is part of my DynamoDB crash course series.

Contents

- Requirements

- A sparse index is almost enough

- But scan results are not ordered

- But a single partition key causes throttling

- But random suffixes are random

- But hash suffixes are not ordered

- But there are a lot of first characters

- But some first bytes need multiple shards

- But tries and prefix ranges are complicated

- But the prefix distribution can change

Requirements #

Say you're using single table design with a table of artists, albums, and songs.1

You keep an artist's items in a single collection

(aka same partition key),

and use sort keys artist, album#{Album}, and song#{Album}#{Song},

depending on their type:

# table Music (partition key: Artist, sort key: sk)
Solar Fields: !btree
  'album#Leaving Home': { Album: Leaving Home, ... }
  'artist': { ... }
  'song#Leaving Home#Air Song': { ... }
  'song#Leaving Home#Monogram': { ... }

To list albums without doing a full table scan, you need a global secondary index.

Let's come up with some reasonable requirements; the GSI should support:

- items up to 500 bytes (we project additional attributes besides the keys)

- 10,000 queries/second, max 100 items/query, sorted alphabetically

- list all albums

- list albums by title

- 10,000 writes/second (to avoid write throttling during imports)

A sparse index is almost enough #

One way to do it is to use a dedicated sparse index, taking advantage of the fact that items with missing index keys don't appear in the index.

If only albums have an Album attribute, we just create a new GSI:

# GSI Albums (partition key: Album, sort key: Artist)
Leaving Home: !btree
  International Pony: { sk: 'album#Leaving Home', ... }
  Solar Fields: { sk: 'album#Leaving Home', ... }
Heavy Migration: !btree
  Dday One: { sk: 'album#Heavy Migration', ...}

If songs have an Album too, we add a dedicated AlbumsPK attribute instead.

In many ways, this is the ideal solution. To list all albums, we scan the index. To list albums by title, we query an index partition key. We have lots of unique partition keys with items spread pretty evenly across them, which should prevent throttling.

But scan results are not ordered #

...except scan results are not ordered, so we're missing the sorted alphabetically part.

What is ordered are sort keys, so we can use a single index collection instead:

# GSI GSI1 (partition key: gsi1pk, sort key: gsi1sk)
'albums': !btree
  Heavy Migration: { Artist: Dday One, sk: 'album#Heavy Migration', ... }
  Leaving Home: { Artist: Solar Fields, sk: 'album#Leaving Home', ... }
  Leaving Home: { Artist: International Pony, sk: 'album#Leaving Home', ... }

This is also seemingly ideal. To list all albums, we query the entire index partition key. To list albums by title, we use a sort key. The results are sorted as required, and there's no limit on the number of items in a collection.

But a single partition key causes throttling #

However, there are per-partition limits of 24 MB/s for reads and 1 MB/s for writes.

Let's see how they compare to our requirements:

- reads: 500 bytes/item * 10k queries/s * 100 items/query = 500 MB/s (~21x)

- writes: 500 bytes/item * 10k items/s = 5 MB/s (5x)

Uh-oh, turns out we need 21 times the throughput one partition can deliver.
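The arithmetic above can be written out as a short calculation. This is only a sketch of the numbers already stated, assuming 1 MB means 10^6 bytes; the constant names are mine.

```python
import math

# Requirements from the list above.
ITEM_SIZE = 500               # bytes per item
QUERIES_PER_SECOND = 10_000
ITEMS_PER_QUERY = 100
WRITES_PER_SECOND = 10_000

# Per-partition limits quoted above (assuming 1 MB = 10**6 bytes).
READ_LIMIT = 24_000_000       # bytes/s
WRITE_LIMIT = 1_000_000       # bytes/s

read_bytes = ITEM_SIZE * QUERIES_PER_SECOND * ITEMS_PER_QUERY
write_bytes = ITEM_SIZE * WRITES_PER_SECOND

# Number of partitions needed to stay under each limit.
read_shards = math.ceil(read_bytes / READ_LIMIT)
write_shards = math.ceil(write_bytes / WRITE_LIMIT)

# The index has to satisfy both, so the larger number wins.
shard_count = max(read_shards, write_shards)
```

Reads dominate here, so the read requirement alone determines how many shards the index needs.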

One way to spread the load is

sharding,

using multiple synthetic partition keys of the form album#{shard_id}.

A common option for the shard id is a random number from a known range,

e.g. album#{randrange(21)}:

# GSI GSI1 (partition key: gsi1pk, sort key: gsi1sk)
'album#1': !btree
  Leaving Home: { Artist: Solar Fields, ... }
'album#12': !btree
  Heavy Migration: { Artist: Dday One, ... }
'album#20': !btree
  Leaving Home: { Artist: International Pony, ... }

To list all albums, query each shard in turn:

for shard in range(21):
    for item in dynamodb.query(f"album#{shard}"):
        yield item

But random suffixes are random #

There's a problem, though – with random shard ids we can't easily list albums by title, since albums with the same title may end up on any shard.

A better option is to calculate the shard id from the album title using a hash function:

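from hashlib import sha256

def hash(s):
    # Note: this shadows the built-in hash(); the explicit byte order
    # keeps int.from_bytes() working on Python versions before 3.11.
    return int.from_bytes(sha256(s.encode()).digest(), 'big')

def album_shard_id(album_title):
    return hash(album_title) % 21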

# GSI GSI1 (partition key: gsi1pk, sort key: gsi1sk)
'album#6': !btree
  Leaving Home: { Artist: Solar Fields, ... }
  Leaving Home: { Artist: International Pony, ... }
'album#8': !btree
  Heavy Migration: { Artist: Dday One, ... }

To list albums by title:

dynamodb.query(f"album#{album_shard_id(album_title)}", sk=album_title, index='GSI1')

But hash suffixes are not ordered #

That takes care of throughput, but now results aren't sorted alphabetically anymore. We can sort items within each shard using the sort key, but they are spread uniformly across shards, and there's no order between shards.
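To see the lack of order between shards, it helps to compute the shard ids for a few titles that are already sorted. A minimal sketch, assuming the same SHA-256 based shard function with 21 shards; the sample titles and variable names are only for illustration.

```python
from hashlib import sha256


def album_shard_id(album_title, shard_count=21):
    # Hash the title and fold the digest into the shard range.
    digest = sha256(album_title.encode()).digest()
    return int.from_bytes(digest, "big") % shard_count


# Titles in alphabetical order...
titles = sorted(["2 Pie Island", "Heavy Migration", "Leaving Home", "Space Cadet"])

# ...and the shard each one lands on. The same title always maps to the
# same shard, but neighbouring titles generally land on unrelated shards.
shard_ids = [album_shard_id(title) for title in titles]
pairs = list(zip(titles, shard_ids))
```

Printing pairs shows that walking the shards in numeric order does not visit the titles in alphabetical order, which is why a sorted listing would need results from every shard.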

Maybe we could use the first letter as shard id instead?

Of course, we have to account for some first letters being more frequent than others. In this case, we can approximate the actual distribution by using MusicBrainz data.

There are 5.5 million albums:

>>> import polars as pl

>>> titles = pl.read_csv(

... 'mbdump/release',

... has_header=False,

... separator='\t',

... quote_char=None,

... columns=[2],

... new_columns=['title'],

... )[:,0]

>>> titles.count()

5535986

...but only 3.3 million unique titles, partly due to different releases of the same album, partly due to some titles being more popular – a few of them, really popular:

>>> titles.value_counts(sort=True)

shape: (3_370_505, 2)

title count

Greatest Hits 4638

Demo 3140

… …

Salsa salsa 1

Glamour: Deluxe 1

>>> titles.unique_counts().quantile([.9, .99, .999, .9999, 1])

[2.0, 11.0, 58.0, 221.0, 4638.0]

Let's look at first characters:

>>> normalized = titles.str.to_lowercase().str.normalize('NFKD').sort()

>>> normalized.str.slice(0, 1).value_counts(sort=True)

shape: (5_402, 2)

title count

t 584760

s 509065

a 317513

l 298757

… …

🫀 1

🫂 1

🫧 1

1

But there are a lot of first characters #

5402?! Indeed, there's more to Unicode than the Latin alphabet:

>>> normalized.str.slice(0, 1).unique().sample(10).to_list()

['學', '舒', 'і', '进', '੦', '潮', '向', '妳', '陳', '🍅']

And it's actually worse than that – there are five thousand characters in our dataset, but there are hundreds of thousands of possible Unicode characters.

This is not a problem when adding the albums, but it is a problem when listing them, since we need to enumerate all the shards in a reasonable amount of time (and most shards being empty doesn't help, either).

As a very bad compromise, we could use the first byte of the UTF-8 encoding instead; this caps the number of shard ids at 256, and at least Latin titles would be sorted (I did say it's a bad compromise). There:

>>> firstbytes = normalized.map_elements(str.encode).bin.slice(0, 1)

>>> firstbytes.value_counts(sort=True)

shape: (136, 2)

title count

b"t" 584760

b"s" 509065

b"a" 317513

b"l" 298757

… …

b"\xd4" 2

b"U" 1

b"\xee" 1

b"\xf3" 1

But some first bytes need multiple shards #

We knew the first byte distribution would be skewed, but some of them don't even fit on a single shard (and it gets worse the more shards we need):

>>> shard_count = 21

>>> firstbytes.value_counts(sort=True).with_columns(

... pl.col('count') / (len(firstbytes) / shard_count)

... ).head()

shape: (5, 2)

title count

b"t" 2.218206

b"s" 1.931068

b"a" 1.204442

b"l" 1.133294

b"b" 1.106949

We're back to where we started: how do we sort between shards with the same prefix?

We don't – we find longer prefixes that fit in one shard.

That sounds like the perfect job for a trie (aka prefix tree). This would also allow us to switch back to characters, and merge small prefixes into ranges until each range fits one shard. But that's complicated, and as is often the case, there must be a better way.2

But tries and prefix ranges are complicated #

We're looking for contiguous ranges, each of a certain size. Tries are good for finding the shortest prefix, but we don't really care about prefix length.

Why not just split the sorted titles into N equal ranges instead? This takes care of the uneven distribution:

>>> boundaries = normalized.gather_every(2000).str.slice(0, 4)

>>> boundaries.value_counts(sort=True)

shape: (2_103, 2)

title count

the 161

live 23

… …

風吹けは 1

魔法少女 1

...provided a long enough prefix:

>>> boundaries = normalized.gather_every(2000).str.slice(0, 16)

>>> boundaries.value_counts(sort=True).filter(pl.col('count') > 1)

shape: (3, 2)

title count

greatest hits 3

demo 2

the very best of 2

...almost there:

>>> boundaries = normalized.gather_every(2000).str.slice(0, 20)

>>> boundaries.value_counts(sort=True).filter(pl.col('count') > 1)

shape: (2, 2)

title count

greatest hits 3

demo 2

This highlights another problem – if the shard size is too small, there may be more than a shard's worth of albums with identical titles; we can fix this by using another, random suffix (ordering doesn't matter anymore, since they have the same title).

Thankfully, our shards are huge, so it's not an issue:

>>> import math

>>> shard_size = math.ceil(len(normalized) / shard_count)

>>> shard_size

263619

>>> boundaries = normalized.gather_every(shard_size).str.slice(0, 20)

To use this, save the list of boundaries in code, and find the index of the biggest boundary that is less than or equal to a given album title:

import bisect

import unicodedata

ALBUM_TITLE_BOUNDARIES = [

'', # replaced with the smallest possible string

'agartha',

'barstow / crazy',

'can you feel it',

'cyan rot',

'dreams take over eve',

'feud semiotics (rb. ',

'grave poetry',

'i live',

'kannaval',

'live in florence',

"mir ist's gleich / i",

'notice',

'platforms ep',

'rituals',

'skylten',

'surtr / absorbed',

'the human touch',

'tonttujen jouluyö: ',

'walking away',

'голос',

]

def album_shard_id(album_title):
    normalized = unicodedata.normalize('NFKD', album_title.lower())
    return bisect.bisect(ALBUM_TITLE_BOUNDARIES, normalized) - 1

>>> album_shard_id('2 Pie Island')

0

>>> album_shard_id('Heavy Migration')

7

>>> album_shard_id('Leaving Home')

9

>>> album_shard_id('Space Cadet')

15
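The whole approach also fits in plain Python, without polars. The sketch below is an illustration on a tiny made-up sample, not the MusicBrainz data: make_boundaries(), shard_id(), and SAMPLE_TITLES are hypothetical names, and slicing with a step stands in for gather_every().

```python
import bisect
import math
import unicodedata


def normalize(title):
    # Same normalization as above: lowercase, then NFKD.
    return unicodedata.normalize("NFKD", title.lower())


def make_boundaries(titles, shard_count, prefix_len=20):
    # Sort the titles, then take every shard_size-th one as a boundary.
    ordered = sorted(normalize(t) for t in titles)
    shard_size = math.ceil(len(ordered) / shard_count)
    boundaries = [t[:prefix_len] for t in ordered[::shard_size]]
    boundaries[0] = ""  # the first shard starts at the smallest possible string
    return boundaries


def shard_id(boundaries, title):
    # Index of the biggest boundary that is <= the normalized title.
    return bisect.bisect(boundaries, normalize(title)) - 1


SAMPLE_TITLES = [
    "Heavy Migration", "Leaving Home", "Space Cadet", "2 Pie Island",
    "Greatest Hits", "Demo", "Movements", "Origin #01",
]

boundaries = make_boundaries(SAMPLE_TITLES, shard_count=4)
in_title_order = sorted(SAMPLE_TITLES, key=normalize)
ids_in_title_order = [shard_id(boundaries, t) for t in in_title_order]
```

Because shard ids never decrease as the titles go up, querying the shards in numeric order returns albums already sorted, which is the property the hash-based scheme lacked.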

But the prefix distribution can change #

We were lucky to have data on the prefix distribution, but that's not always the case, and even if it is, the distribution can change over time.

For example, the last of the 21 shards above starts in the Cyrillic Unicode block, which means most existing scripts go into a single shard. What if we import 1 million Chinese and Japanese albums at some point?

One way to deal with this is to give more weight to known gaps in the data. Another is to have more shards from the start to account for unknown gaps – 210 shards instead of 21 sounds pretty reasonable.

If all else fails, you can move to a new index with more shards, but that comes with its own complications, so it's best to get it roughly right from the start.

Anyway, that's it for now.

Learned something new today? Share it with others, it really helps!

Want to know when new articles come out? Subscribe here to get new stuff straight to your inbox!

This is a simplified example; as the MusicBrainz database shows, the schema for this kind of thing would be way more complicated in practice. [return]

You're welcome to try, though, especially if you're preparing for an interview. [return]

Wingware

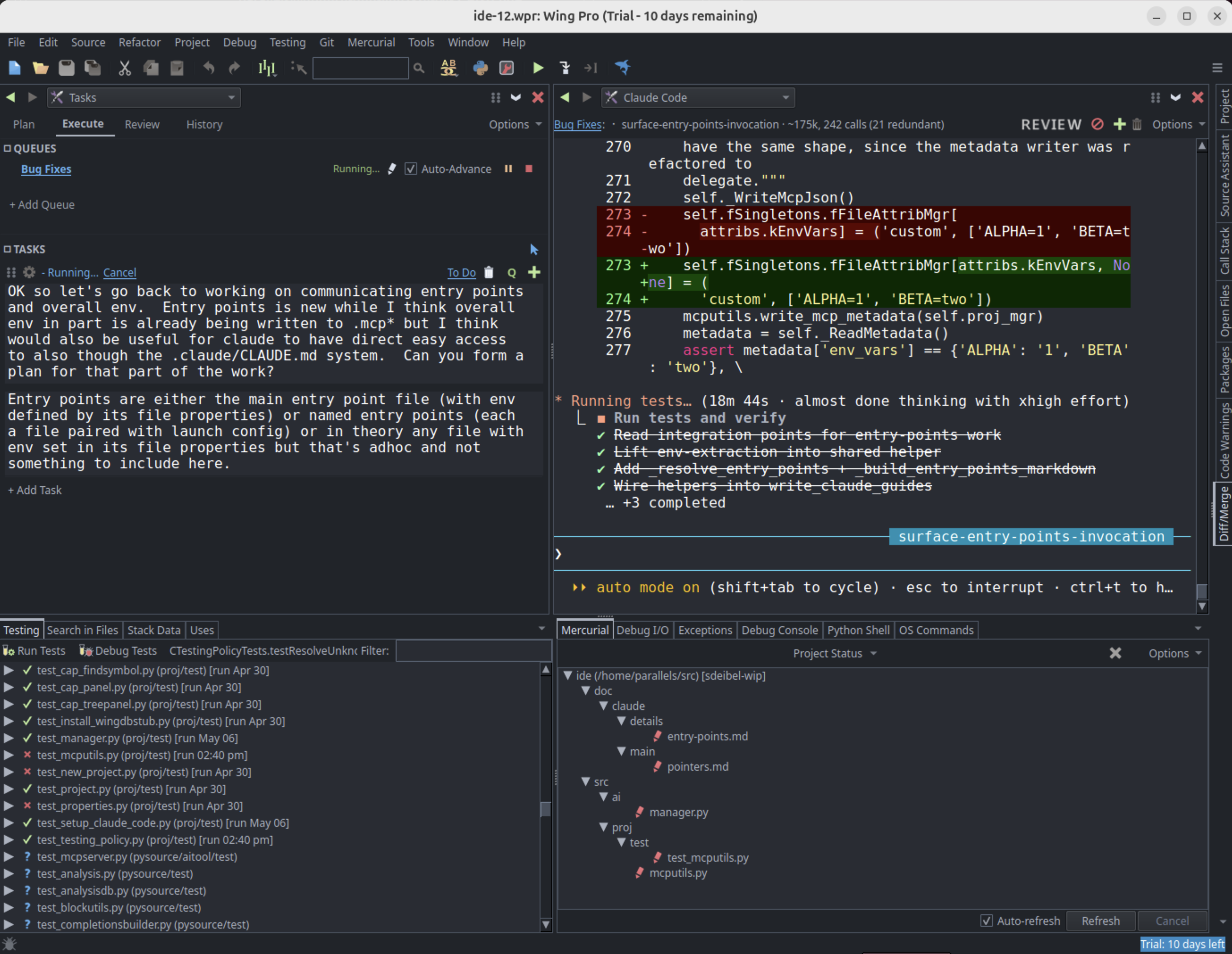

Wing Python IDE 12 Early Access - June 8, 2026

Wing 12 is now available as an early access release that focuses on AI agent driven development. Wing 12 introduces deep integration with Claude Code, including a dedicated Claude Code tool, a new Tasks tool for planning, executing, and reviewing AI agent work, and a set of MCP servers that allow agents to work more efficiently by giving them access to Wing's source code analysis, unit testing, and debugger features.

For those using Claude Code, Wing 12 broadens the focus from code-centric direct development to also include managing multiple concurrent AI agents. Of course all of Wing's classic IDE features are still available -- the powerful debugger, deep code analysis and warnings, full-featured editor, project & package management, and much more.

Wing 12 also adds true pseudo-terminal support to OS Commands and Debug I/O, reenvisions the OS Commands tool so that commands can be dragged to tool or editor splits, reorganizes the Tools menu, adds search and back/forward navigation to the Preferences dialog, supports automatic test discovery, speeds up detection of externally modified files, and makes many other improvements.

For more information, see the Wingware Early Access Program. Anyone can participate just by downloading the release.

If you have questions, please don't hesitate to contact us at support@wingware.com.

June 07, 2026

Eli Bendersky

Plugins case study: mdBook preprocessors

mdBook is a tool for easily creating books out of Markdown files. It's very popular in the Rust ecosystem, where it's used (among other things) to publish the official Rust book.

mdBook has a simple yet effective plugin mechanism that can be used to modify the book output in arbitrary ways, using any programming language or tool. This post describes the mechanism and how it aligns with the fundamental concepts of plugin infrastructures.

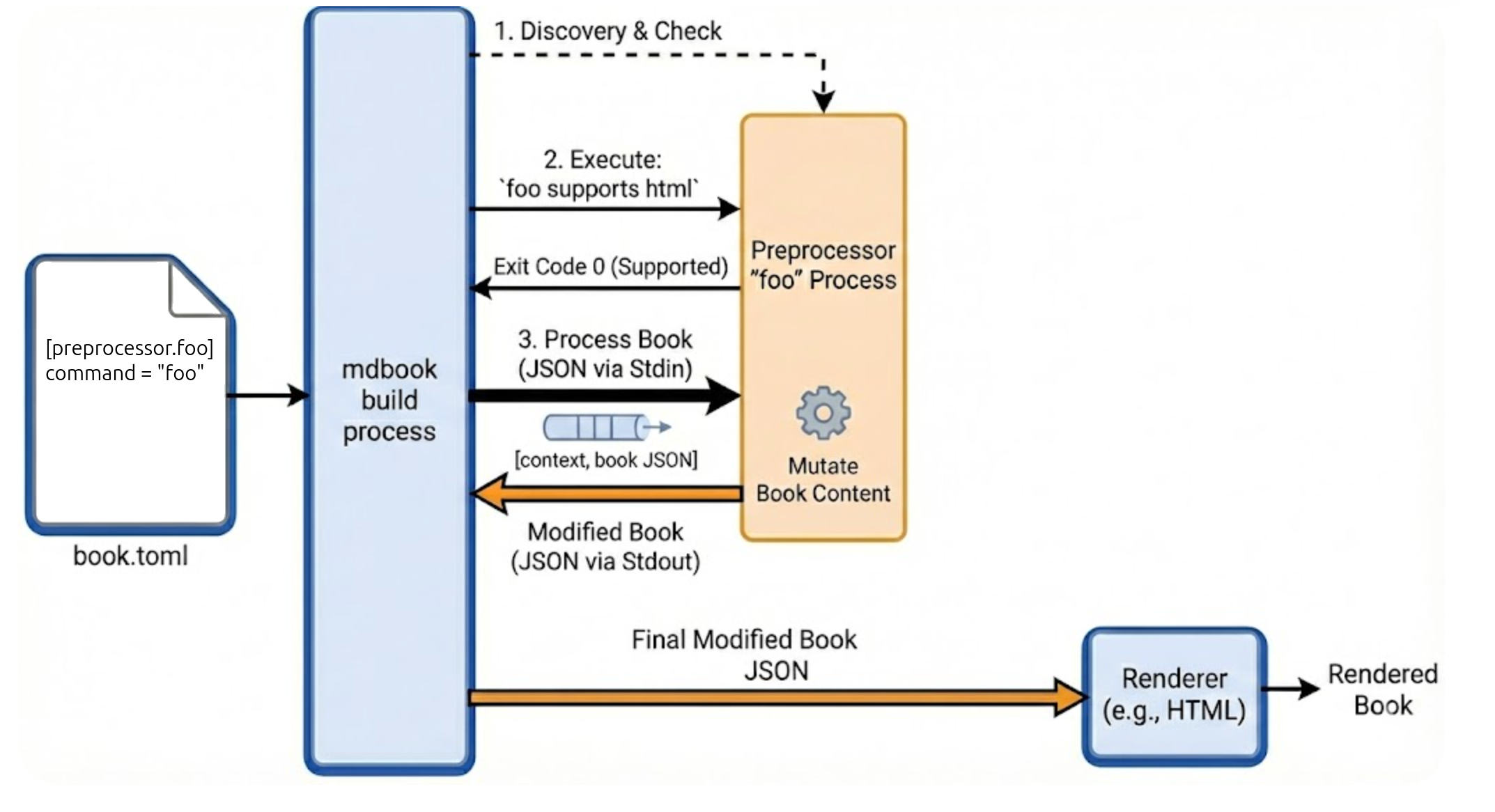

mdBook preprocessors

mdBook's architecture is pretty simple: your contents go into a directory tree of Markdown files. mdBook then renders these into a book, with one file per chapter. The book's output is HTML by default, but mdBook supports other outputs like PDF.

The preprocessor mechanism lets us register an arbitrary program that runs on the book's source after it's loaded from Markdown files; this program can modify the book's contents in any way it wishes before it all gets sent to the renderer for generating output.

The official documentation explains this process very well.

Sample plugin

I rewrote my classical "narcissist" plugin for mdBook; the code is available here.

In fact, there are two renditions of the same plugin there:

- One in Python, to demonstrate how mdBook can invoke preprocessors written in any programming language.

- One in Rust, to demonstrate how mdBook exposes an application API to plugins written in Rust (since mdBook is itself written in Rust).

Fundamental plugin concepts in this case study

Let's see how this case study of mdBook preprocessors measures against the Fundamental plugin concepts that were covered several times on this blog.

Discovery

Discovery in mdBook is very explicit. For every plugin we want mdBook to use, it has to be listed in the project's book.toml configuration file. For example, in the code sample for this post, the Python narcissist plugin is noted in book.toml as follows:

[preprocessor.narcissistpy]

command = "python3 ../preprocessor-python-narcissist/narcissist.py"

Each preprocessor is a command for mdBook to execute in a sub-process. Here it uses Python, but it can be anything else that can be validly executed.

Registration

For the purpose of registration, mdBook actually invokes the plugin command twice. The first time, it passes the arguments supports <renderer> where <renderer> is the name of the renderer (e.g. html). If the command returns 0, it means the preprocessor supports this renderer; otherwise, it doesn't.

In the second invocation, mdBook passes some metadata plus the entire book in JSON format to the preprocessor through stdin, and expects the preprocessor to return the modified book as JSON to stdout (using the same schema).
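The two invocations can be sketched in a few lines of Python. This is not the narcissist plugin from the post, just a minimal illustration: it assumes that stdin carries a two-element JSON array of [context, book], and that the book has a "sections" list whose chapter entries look like {"Chapter": {"content": ..., "sub_items": [...]}}. Those field names are my recollection of mdBook's format and may differ between versions; the text substitution is made up for the demo.

```python
import io
import json


def supports(renderer):
    # Hypothetical policy: support everything except a made-up renderer name.
    return renderer != "not-supported"


def rewrite_chapters(items):
    for item in items:
        chapter = item.get("Chapter") if isinstance(item, dict) else None
        if chapter is None:
            continue  # skip separators and other non-chapter entries
        chapter["content"] = chapter["content"].replace("mdBook", "**mdBook**")
        rewrite_chapters(chapter.get("sub_items", []))


def main(argv, stdin, stdout):
    # First invocation: "supports <renderer>", answered via the exit code.
    if len(argv) >= 3 and argv[1] == "supports":
        return 0 if supports(argv[2]) else 1
    # Second invocation: read [context, book], write the modified book.
    context, book = json.load(stdin)
    rewrite_chapters(book.get("sections", []))
    json.dump(book, stdout)
    return 0


# Simulate both invocations with in-memory streams.
supports_code = main(["narcissist.py", "supports", "html"], io.StringIO(), io.StringIO())
sample = [
    {"renderer": "html"},
    {"sections": [{"Chapter": {"content": "Hello mdBook", "sub_items": []}}, "Separator"]},
]
out = io.StringIO()
process_code = main(["narcissist.py"], io.StringIO(json.dumps(sample)), out)
result = json.loads(out.getvalue())
```

A real script would end with sys.exit(main(sys.argv, sys.stdin, sys.stdout)) so that mdBook sees the exit code of the supports check.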

Hooks

In terms of hooks, mdBook takes a very coarse-grained approach. The preprocessor gets the entire book in a single JSON object (along with a context object that contains metadata), and is expected to emit the entire modified book in a single JSON object. It's up to the preprocessor to figure out which parts of the book to read and which parts to modify.

Given that books and other documentation typically have limited sizes, this is a reasonable design choice. Even tens of MiB of JSON-encoded data are very quick to pass between sub-processes via stdout and marshal/unmarshal. But we wouldn't be able to implement Wikipedia using this design.

Exposing an application API to plugins

This is tricky, given that the preprocessor mechanism is language-agnostic. Here, mdBook only offers additional utilities to preprocessors implemented in Rust. These get access to mdBook's API to unmarshal the JSON representing the context metadata and book's contents. mdBook offers the Preprocessor trait Rust preprocessors can implement, which makes it easier to wrangle the book's contents. See my Rust version of the narcissist preprocessor for a basic example of this.

Renderers / backends

Actually, mdBook has another plugin mechanism, but it's very similar conceptually to preprocessors. A renderer (also called a backend in some of mdBook's own doc pages) takes the same input as a preprocessor, but is free to do whatever it wants with it. The default renderer emits the HTML for the book; other renderers can do other things.

The idea is that the book can go through multiple preprocessors, but at the end a single renderer.

The data a renderer receives is exactly the same as a preprocessor - JSON encoded book contents. Due to this similarity, there's no real point getting deeper into renderers in this post.

June 06, 2026

Armin Ronacher

Communities of Not

There is a strange thing that happens in communities that gather around abstinence from something: identity from opposition. At their best these communities are not just negative: childfree spaces can be about autonomy, choice and acceptance, anti-car spaces about safer streets and transit, and LLM-skeptical developer spaces about the future of labor, code quality and slop1. But the thing being refused often does not go away and instead becomes the main subject of the community’s identity.

That would be fine if it stayed at criticism, maybe even angry criticism, but more often than not it turns into policing and hatred towards others. An influencer without children becomes a parent, an urban bike commuter by choice buys a Porsche, a respected developer tries LLMs, and the community feels betrayed because it assumed they were members of the same tribe. The expulsion of that person (who never signed up to be a community member) is entirely imaginary but the punishment that the community unleashes is not: people pile on and shame them, quote them out of context and turn their weakest moments into proof that the person was always unserious, a charlatan or should not be listened to.

I do not think the answer is to tell people to stop paying attention. Cars shape cities even for people who cycle, children influence politics, workplaces and taxes even for people who do not have them. For us developers, LLMs show up in editors, issue trackers, hiring conversations, management pressure and code reviews whether we asked for them or not. Resisting that can be legitimate but that is no excuse for using one’s rejection to justify shitty mob behavior.

I understand the thinking all too well, because I have done versions of this myself in the past. It took me a while to become more accepting of other people’s worldviews that diverge from mine. Whatever insecurities we have, finding a group of others sharing them can be comforting. The danger is that being part of a crowd of negativity can easily make us part of collective harassment.

I can only encourage you to breathe, slow down, de-escalate when given the chance, and resist the temptation to always assume the most catastrophic reading. Default to being open to new things. Being negative towards something, and making that one’s identity, is an easy trap to fall into.

-

These examples are not meant as equivalents. The recent mob against rsync is the LLM version that prompted this post. I picked the others because I’m familiar with those communities and they all show similar cases of personal choices being interpreted as betrayal.↩

June 05, 2026

Kay Hayen

Nuitka Release 4.1

This is to inform you about the new stable release of Nuitka. It is the extremely compatible Python compiler, “download now”.

This release adds many new features and corrections with a focus on async code compatibility, missing generics features, Python 3.14 compatibility, and, yet again, Python compilation scalability.

Bug Fixes

Python 3.14: Fix, decorators were breaking when disabling deferred annotations. (Fixed in 4.0.1 already.)

Fix, nested loops could have wrong traces, leading to mis-optimization. (Fixed in 4.0.1 already.)

Plugins: Fix, run-time check of package configuration was incorrect. (Fixed in 4.0.1 already.)

Compatibility: Fix, __builtins__ lacked necessary compatibility in compiled functions. (Fixed in 4.0.1 already.)

Distutils: Fix, incorrect UTF-8 decoding was used for TOML input file parsing. (Fixed in 4.0.1 already.)

Fix, multiple hard value assignments could cause compile time crashes. (Fixed in 4.0.1 already.)

Fix, string concatenation was not properly annotating exception exits. (Fixed in 4.0.2 already.)

Windows: Fix, --verbose-output and --show-modules-output did not work with forward slashes. (Fixed in 4.0.2 already.)

Python 3.14: Fix, there were various compatibility issues including dictionary watchers and inline values. (Fixed in 4.0.2 already.)

Python 3.14: Fix, stack pointer initialization to localsplus was incorrect to avoid garbage collection issues. (Fixed in 4.0.2 already.)

Python 3.12+: Fix, generic type variable scoping in classes was incorrect. (Fixed in 4.0.2 already.)

Python 3.12+: Fix, there were various issues with function generics. (Fixed in 4.0.2 already.)

Python 3.8+: Fix, names in named expressions were not mangled. (Fixed in 4.0.2 already.)

Plugins: Fix, module checksums were not robust against quoting style of module-name entry in YAML configurations. (Fixed in 4.0.2 already.)

Plugins: Fix, doing imports in queried expressions caused corruption. (Fixed in 4.0.2 already.)

UI: Fix, support for uv_build in the --project option was broken. (Fixed in 4.0.2 already.)

Compatibility: Fix, names assigned in assignment expressions were not mangled. (Fixed in 4.0.2 already.)

Python 3.12+: Fix, there were still various issues with function generics. (Fixed in 4.0.3 already.)

Clang: Fix, debug mode was disabled for clang generally, but only ClangCL and macOS Clang didn’t want it. (Fixed in 4.0.3 already.)

Zig: Fix, --windows-console-mode=attach|disable was not working when using Zig. (Fixed in 4.0.3 already.)

macOS: Fix, yet another way self dependencies can look like, needed to have support added. (Fixed in 4.0.3 already.)

Python 3.12+: Fix, generic types in classes had bugs with multiple type variables. (Fixed in 4.0.3 already.)

Scons: Fix, repeated builds were not producing binary identical results. (Fixed in 4.0.3 already.)

Scons: Fix, compiling with newer Python versions did not fall back to Zig when the developer prompt MSVC was unusable, and error reporting could crash. (Fixed in 4.0.4 already.)

Zig: Fix, the workaround for Windows console mode attach or disable was incorrectly applied on non-Windows platforms. (Fixed in 4.0.4 already.)

Standalone: Fix, linking with Python Build Standalone failed because libHacl_Hash_SHA2 was not filtered out unconditionally. (Fixed in 4.0.4 already.)

Python 3.6+: Fix, exceptions like CancelledError thrown into an async generator awaiting an inner awaitable could be swallowed, causing crashes. (Fixed in 4.0.4 already.)

Fix, not all ordered set modules accepted generators for update. (Fixed in 4.0.5 already.)

Plugins: Disabled warning about rebuilding the pytokens extension module. (Fixed in 4.0.5 already.)

Standalone: Filtered libHacl_Hash_SHA2 from link libs unconditionally. (Fixed in 4.0.5 already.)

Debugging: Disabled unusable unicode consistency checks for Python versions 3.4 to 3.6. (Fixed in 4.0.5 already.)

Python 3.12+: Avoided cloning call nodes on class level which caused issues with generic functions in combination with decorators. (Added in 4.0.5 already.)

Python 3.12+: Added support for generic type variables in async def functions. (Added in 4.0.5 already.)

UI: Fix, flushing outputs for prompts was not working in all cases when progress bars were enabled. (Fixed in 4.0.6 already.)

UI: Fix, unused variable warnings were missing at C compile time when using zig as a C compiler. (Fixed in 4.0.6 already.)

Scons: Fix, forced stdout and stderr paths as a feature was broken. (Fixed in 4.0.6 already.)

Fix, replacing a branch did not accurately track shared active variables causing optimization crashes. (Fixed in 4.0.7 already.)

macOS: Fix, failed to remove extended attributes because files need to be made writable first. (Fixed in 4.0.7 already.)

Fix, dict

popandsetdefaultusing with:=rewrites lacked exception-exit annotations for un-hashable keys. (Fixed in 4.0.8 already.)Python 3.13: Fix, the

__parameters__attribute of generic classes was not working. (Fixed in 4.0.8 already.)Python 3.11+: Fix, starred arguments were not working as type variables. (Fixed in 4.0.8 already.)

Python2: Fix,

FileNotFoundErrorcompatibility fallback handling was not working properly. (Fixed in 4.0.8 already.)Compatibility: Fix, loop ownership check in value traces was missing, causing issues with nested loops.

Windows: Improved

--windows-console-mode=attachto properly handle console handles, enabling cases likeos.systemto work nicely.Python2: Fix, there was a compatibility issue where providing default values to the

mkdtempfunction was failing.Windows: Fix, there were spurious issues with C23 embedding in 32-bit MinGW64 by switching to

coff_objresource mode for it as well.Plugins: Fix, the

post-import-codeexecution could fail because the triggering sub-package was not yet available insys.modules.UI: Fix, listing package DLLs with

--list-package-dllswas broken due to recent plugin lifecycle changes.UI: Fix,

--list-package-exewas not working properly on non-Windows platforms failing to detect executable files correctly.UI: Handled paths starting with

{PROGRAM_DIR}the same as a relative path when parsing the--onefile-tempdir-specoption.Plugins: Followed multiprocessing

forkserverchanges for newer Python versions.Python 3.12+: Fix, generic class type parameters handling was incorrect.

Python 3.12: Fix, deferred evaluation of type aliases was failing.

Python 3.12+: Aligned sum built-in float summation with CPython’s compensated sum for better accuracy.

Python 3.10+: Fix, uncompiled coroutine throw() return handling was incorrect, restoring completed coroutine results via StopIteration.value rather than exposing them as ordinary return values to the outer await chain.

Python 3.13+: Fix, uncompiled coroutine cancel()/await suspension handling was incorrect, improved to ensure integration compatibility.

macOS: Made finding create-dmg more robust by also checking the Homebrew path for Intel and from PATH properly.

Compatibility: Fix, class frames were not exposing frame locals.

UI: Detected static-libpython problems, which affected some forms of Anaconda.

Distutils: Rejected --project mixed with --main arguments as it is not useful.

macOS: Fix, zig from PATH or from ziglang was not being used.

Distutils: Fix, the wrong module-root config value was being checked for uv build backend.

macOS: Fix, was attempting to change removed (rejected) DLLs, which of course failed and errored out.

Python 3.14: Fix, tuple reuse was not fully compatible, potentially causing crashes due to outdated hash caches.

Fix, fake modules were still attempted to be located when imported by other code, which could conflict with existing modules.

Python 3.5+: Fix, failed to send uncompiled coroutines the sent in value in yield from.

Fix, older gcc compilers lacking newer intrinsic methods had compilation issues that needed to be addressed.

Standalone: Fix, multiphase module extension modules with post-load code were not working properly.

Fix, avoid using the non-inline copy of pkg_resources with the inline copy of Jinja2. These could mismatch and cause errors.

Fix, loops could make releasing of previous values very unclear, causing optimization errors.

Fix, incbin resource mode was not working with old gcc C++ fallback.

Python 3.4 to 3.6: Fix, bytecode demotion was not working properly for these versions, and bytecode-only files were not working either.

Plugins: Added a check for the broken patchelf versions 0.10 and 0.11 to prevent breaking Qt plugins.

Android: Allowed patchelf version 0.18 on Android.

Windows: Fix, the header path for self uninstalled Python was not detected correctly.

Release: Fix, inclusion of the pkg_resources inline copy for Python 2 to source distributions was missing.

UI: Detected the OBS versions of SUSE Linux better.

Suse: Allowed using patchelf 0.18.0 there too.

Python 3.11: Fix, package and module dicts were not aligned close enough to avoid a CPython bug.

Fix, unbound compiled methods could crash when called without an object passed.

Standalone: Fix, multiphase module extension modules with postload. (Fixed in 4.0.8 already.)

Onefile: Fix, while waiting for the child, it may already be terminated.

macOS: Removed existing absolute rpaths for Homebrew and MacPorts.

Python 3.14: Avoided warning in CPython headers.

Python 3.14: Followed allocator changes more closely.

Compatibility: Avoided using pkg_resources for Jinja2 template location for loading.

No-GIL: Applied some bug fixes to get basic things to work.
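
The compensated summation mentioned in the Python 3.12+ item above can be illustrated with a small sketch. It uses Neumaier's variant of compensated summation and only shows the general technique. The function names are made up for this example, and nothing here is taken from Nuitka's or CPython's actual C code.

```python
def naive_sum(values):
    """Plain left-to-right float addition, which loses low-order bits."""
    total = 0.0
    for x in values:
        total += x
    return total


def neumaier_sum(values):
    """Compensated summation: track the rounding error in a second float."""
    total = 0.0
    compensation = 0.0  # running sum of the bits lost in each addition
    for x in values:
        t = total + x
        if abs(total) >= abs(x):
            # Low-order bits of x were lost when adding it to total.
            compensation += (total - t) + x
        else:
            # Low-order bits of total were lost instead.
            compensation += (x - t) + total
        total = t
    return total + compensation


if __name__ == "__main__":
    data = [1.0, 1e100, 1.0, -1e100]
    print(naive_sum(data))     # the two 1.0 values are swallowed by 1e100
    print(neumaier_sum(data))  # the compensation term recovers them
```

A compiled program that used the naive loop while the interpreter used a compensated one could print different float totals for the same input, which is the kind of mismatch such an alignment avoids.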

Package Support

Standalone: Add support for newer paddle version. (Added in 4.0.1 already.)

Standalone: Add workaround for refcount checks of pandas. (Fixed in 4.0.1 already.)

Standalone: Add support for newer h5py version. (Added in 4.0.2 already.)

Standalone: Add support for newer scipy package. (Added in 4.0.2 already.)

Plugins: Revert accidental os.getenv over os.environ.get changes in anti-bloat configurations that stopped them from working. Affected packages are networkx, persistent, and tensorflow. (Fixed in 4.0.5 already.)

Standalone: Added missing DLLs for openvino. (Added in 4.0.7 already.)

Enhanced the package configuration YAML schema by adding the relative_to parameter for from_filenames DLL specification, avoiding error-prone purely relative paths.

Standalone: Fix, flet_desktop app assets were missing, now preserving the packaged runtime and sidecar DLLs.

Standalone: Added support for the tyro package.

Standalone: Added data files for the perfetto package.

Standalone: Added support for anyio process forking.

Standalone: Added support for the plotly.graph package.

Anaconda: Fix, dependencies for the numpy conda package on Windows were incorrect.

Plugins: Enhanced the auto-icon hack in PySide6 to use compatible class names.

Standalone: Fix, Qt libraries were duplicated with PySide6 WebEngine framework support on macOS.

Plugins: Fix, automatic detection of mypyc runtime dependencies was including all top level modules of the containing package by accident. (Fixed in 4.0.5 already.)

Anaconda: Fix, delvewheel plugin was not working with Python 3.8+. This enhances compatibility with installed PyPI packages that use it for their DLLs. (Fixed in 4.0.6 already.)

Plugins: Fix, our protection workaround could confuse methods used with PySide6.

New Features

UI: Added the --recommended-python-version option to display recommended Python versions for supported, working, or commercial usage.

UI: Add message to inform users about Nuitka[onefile] if compression is not installed. (Added in 4.0.1 already.)

UI: Add support for uv_build in the --project option. (Added in 4.0.1 already.)

Onefile: Allow extra includes as well. (Added in 4.0.2 already.)

UI: Add nuitka-project-set feature to define project variables, checking for collisions with reserved runtime variables. (Added in 4.0.2 already.)

Scons: Added new option to select --reproducible builds or not. (Added in 4.0.6 already.)

Python 3.10+: Added support for importlib.metadata.package_distributions(). (Added in 4.0.8 already.)

Plugins: Added support for the multiprocessing forkserver context. (Added in 4.0.8 already; for 4.1, support for Python 3.6 and earlier as well as 3.14 was added too.)

Reports: Added structured resource usage (rusage) performance information to compilation reports.

Reports: Included individual module-level C compiler caching (ccache/clcache) statistics in compilation reports.

Added support for detecting and correctly resolving the Python prefix for the PyEnv on Homebrew Python flavor.

macOS: Added support for rusage information for Scons.

UI: Added the __compiled__.extension_filename attribute to give the real filename of the containing extension module.

Windows: Added support for --clang or ARM. (Added in 4.0.8 already.)

Windows: Added support for resource names as not just integers, important when we copy them from template files.

MacPorts: Added basic support for this Python flavor. More work will be needed to get it to work fully though.

Optimization

Avoid including importlib._bootstrap and importlib._bootstrap_external. (Added in 4.0.1 already.)

Linux: Cached the syscall used for time keeping during compilation to avoid loading libc for each trace. (Added in 4.0.8 already.)

UI: Output a warning for modules that remain unfinished after the third optimization pass.

Added an extra micro pass trigger when new variables are introduced or variable usage changes severely, ensuring optimizations are fully propagated, avoiding unnecessary extra full passes.

Provided scripts to compile Python statically with PGO tailored for Nuitka on Linux, Windows, and macOS.

Added support for running the Data Composer tool from a compiled Nuitka binary without spawning an uncompiled Python process.

Enhanced the usage of vectorcall for PyCFunction objects by directly checking for its presence instead of relying purely on flags, allowing more frequent use of this faster execution path.

Cached frequently used declarations for top-level variables to speed up C code generation.

Sped up trace collection merging by avoiding unnecessary set creation and using a set instead of a list for escaped traces.

Optimized plugin hook execution by tracking overloaded methods and added an option to show plugin usage statistics.

Improved performance of module location by avoiding unnecessary module name reconstruction and redundant filesystem checks for pre-loaded packages.

Improved the caching of distribution name lookups to effectively avoid repeated IO operations across all package types.

Plugins: Cached callback plugin dispatch for onFunctionBodyParsing and onClassBodyParsing to skip argument computation when no plugin overrides them.

Python 3.13: Handled sub-packages of pathlib as hard modules.

Handled hard attributes through merge traces as well.

Made constant blobs more compact by avoiding repeated identifiers and unnecessary fields.

Enhanced Python compilation scripts further. (Fixed in 4.0.8 already.)

Recognized late incomplete variables better. (Fixed in 4.0.8 already.)

Made constant blobs more compact. (Fixed in 4.0.8 already.)

Optimized calls with only constant keywords and variable posargs too.
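
The plugin dispatch items above (tracking overloaded methods, and skipping argument computation when no plugin overrides a hook) describe a general pattern that can be sketched as follows. Only the hook names onFunctionBodyParsing and onClassBodyParsing come from the notes. The classes PluginBase, QuietPlugin, TracingPlugin, and Dispatcher are hypothetical and do not reflect Nuitka's real plugin API.

```python
class PluginBase:
    """Hypothetical base class whose default hooks do nothing."""

    def onFunctionBodyParsing(self, module_name, function_name, body):
        return None

    def onClassBodyParsing(self, module_name, class_name, body):
        return None


class QuietPlugin(PluginBase):
    """Overrides no hook at all."""


class TracingPlugin(PluginBase):
    """Overrides only the function body hook."""

    def __init__(self):
        self.seen = []

    def onFunctionBodyParsing(self, module_name, function_name, body):
        self.seen.append((module_name, function_name))


def overrides(plugin, hook_name):
    """True if the plugin's class replaces the base implementation."""
    return getattr(type(plugin), hook_name) is not getattr(PluginBase, hook_name)


class Dispatcher:
    HOOKS = ("onFunctionBodyParsing", "onClassBodyParsing")

    def __init__(self, plugins):
        # Computed once: which plugins actually override each hook.
        self._overriding = {
            hook: [p for p in plugins if overrides(p, hook)] for hook in self.HOOKS
        }

    def dispatch(self, hook_name, make_args):
        """Call the hook on overriding plugins; build arguments only if needed."""
        targets = self._overriding[hook_name]
        if not targets:
            return False  # nobody listens, so make_args() is never called
        args = make_args()
        for plugin in targets:
            getattr(plugin, hook_name)(*args)
        return True
```

Passing the arguments as a callable is the design choice that matters here: when the cached list for a hook is empty, the potentially expensive argument construction never runs.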

Anti-Bloat

Fix, memory bloat occurred when C compiling sqlalchemy. (Fixed in 4.0.2 already.)

Avoid using pydoc in PySimpleGUI. (Added in 4.0.2 already.)

Avoided using doctest from zodbpickle. (Added in 4.0.5 already.)

Avoided inclusion of cython when using pyav. (Added in 4.0.7 already.)

Avoided including typing_extensions when using numpy. (Added in 4.0.7 already.)

Organizational

UI: Relocated the warning about the available source code of extension modules to be evaluated at a more appropriate time.

Debian: Remove recommendation for libfuse2 package as it is no longer useful.

Debian: Used platformdirs instead of appdirs.

Debugging: Removed Python 3.11+ restriction for clang-format as it is available everywhere, even Python 2.7, and we still want nicely formatted code when we read things. (Added in 4.0.6 already.)

Removed no longer useful inline copy of wax_off. We have our own stubs generator project.

Release: Added missing package to the CI container for building Nuitka Debian packages.

Developer: Updated AI instructions for creating Minimal Reproducible Examples (MRE) to skip unneeded C compilation.

Debugging: Added an internal function for checking if a string is a valid Python identifier.

AI: Added a task in Visual Studio Code to export the currently selected Python interpreter path to a file, making it available as “python” and “pip” matching the selected interpreter. This makes it easier to use a specific version with no instructions needed.

AI: Updated the rules to instruct AI to only generate useful comments that add context not present in the code.

Containers: Added template rendering support for Jinja2 (.j2) container files in our internal Podman tools.

Projects: Clarified the current status and rationale of Python 2.6 support in the developer manual.

Debugging: Added experimental flag --experimental=ignore-extra-micro-pass to allow ignoring extra micro pass detection.

Visual Code: Added integration scripts for bash and zsh autocompletion of Nuitka CLI options. These are now also integrated into Visual Studio Code terminal profiles and the Debian package.

RPM: Included the Python compile script for Linux.

RPM: Removed the requirement for distutils in the spec.

Tests